386 kids missing.

In just 3 days, hundreds of children went missing in the dense crowds at Panchkula, where the sheer mass of human bodies blocked standard GPS. We built an intelligent ecosystem to ensure this never happens again.

Child safety in India's crowded public spaces is a systemic crisis. In constantly moving crowds, a child can be separated from a guardian in moments, and data from the National Crime Records Bureau (NCRB) makes the scale of the problem unambiguous.

The current system is manual, discretionary, and reactive. Police routinely delay filing FIRs despite a 2013 Supreme Court mandate. CCTV is passive, PA announcements are often inaudible over the crowd, and security guards are stretched thin. Every step is a potential point of failure: a chain of manual handoffs where a panicked parent's verbal report can be dismissed or downplayed.

The fundamental flaw isn't a lack of technology; it's the absence of an automated, accountable trigger. Today's process relies on human discretion at every step. A time-stamped, GPS-located digital alert sent simultaneously to the parent, venue security, and a police portal creates a record that cannot be quietly ignored. That's what we built.

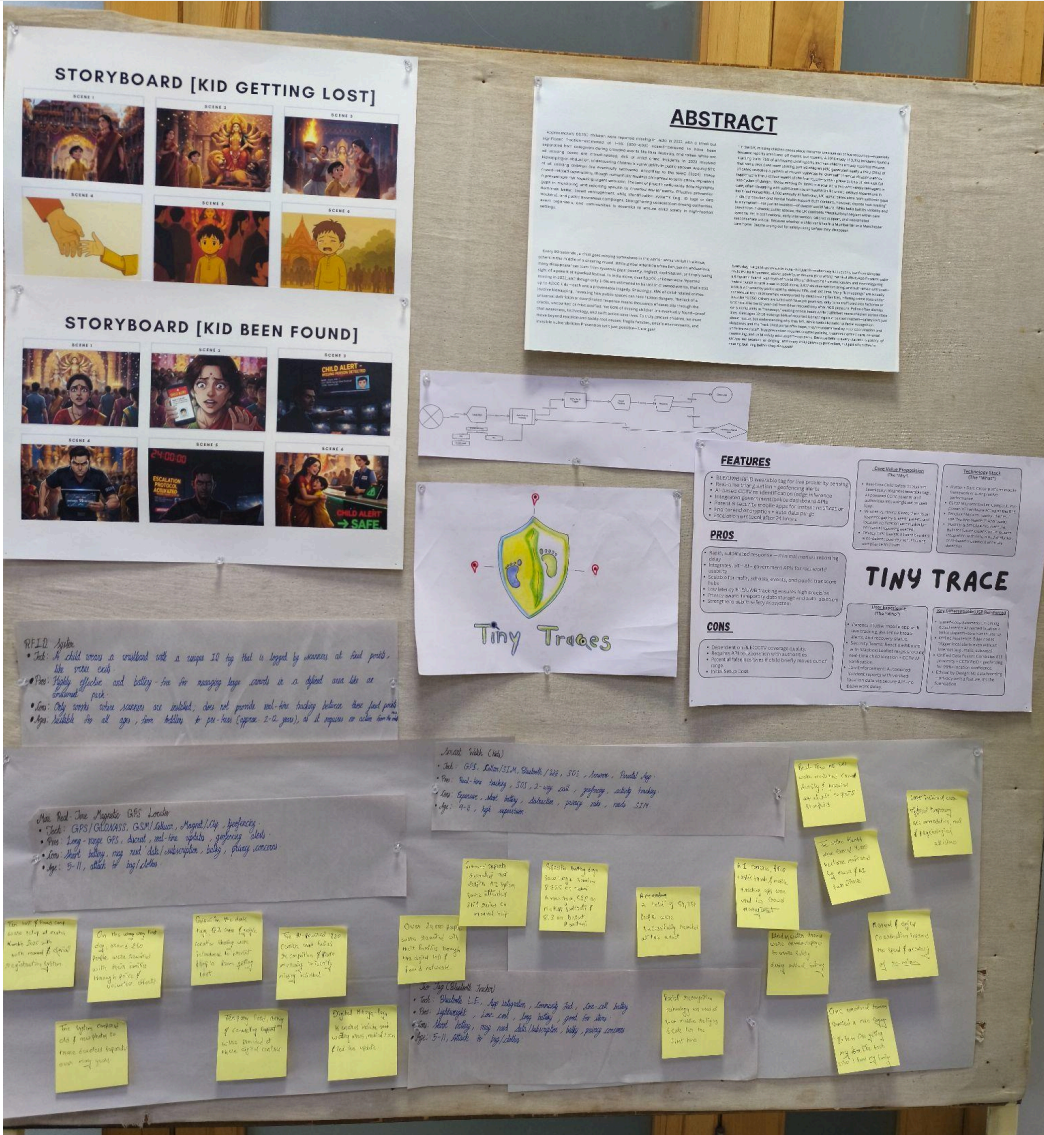

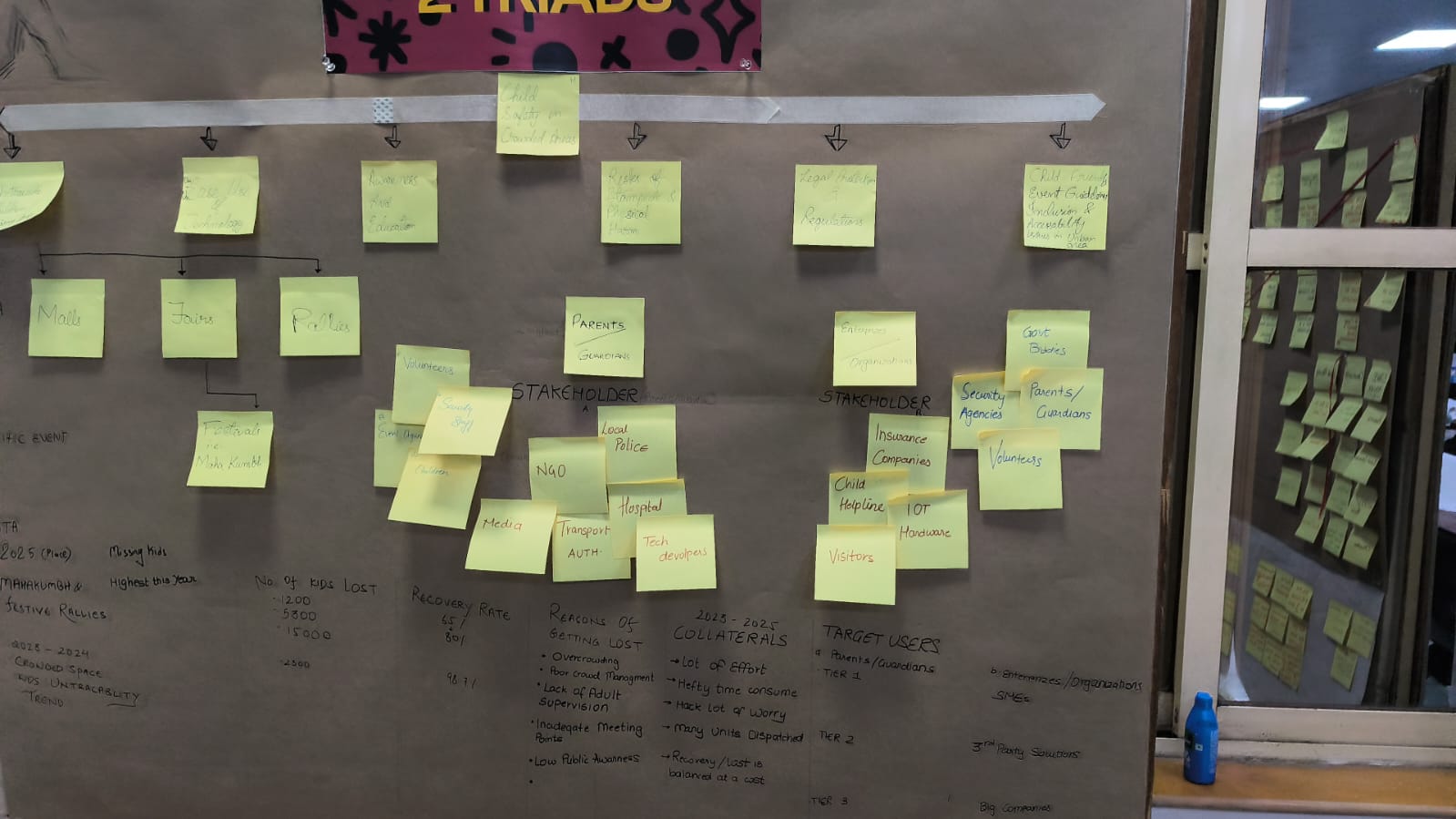

We mapped high-risk environments, analyzed how lost children behave at different ages, audited existing solutions, and identified systemic failure points, all on chart paper, sticky notes, and whiteboards before touching a screen.

We identified three tiers of high-risk zones: transit hubs (railway/bus stations) where children can be transported across state lines within hours, religious festivals (Kumbh Mela, Durga Puja) with extreme crowd density, and urban commercial centers (malls, markets) with complex multi-floor layouts.

A critical finding was the cognitive behavior gap by age: children aged 2–4 freeze and cry, 5–7 year-olds wander confidently toward remembered landmarks, and 8–10 year-olds actively avoid asking adults for help. Each behavior demanded a different system response.

I aligned the team on the child safety brief from the hackathon's problem list, set the research direction, led the deep dive into NCRB data and supporting sources (Google Scholar, government SOPs), mapped stakeholders and pain points onto chart paper, and, crucially, taught the developers on the team to think like designers: empathy mapping, problem framing, second-order effects. The entire wall of sticky notes, stakeholder maps, and data charts was built by hand before any code was written.

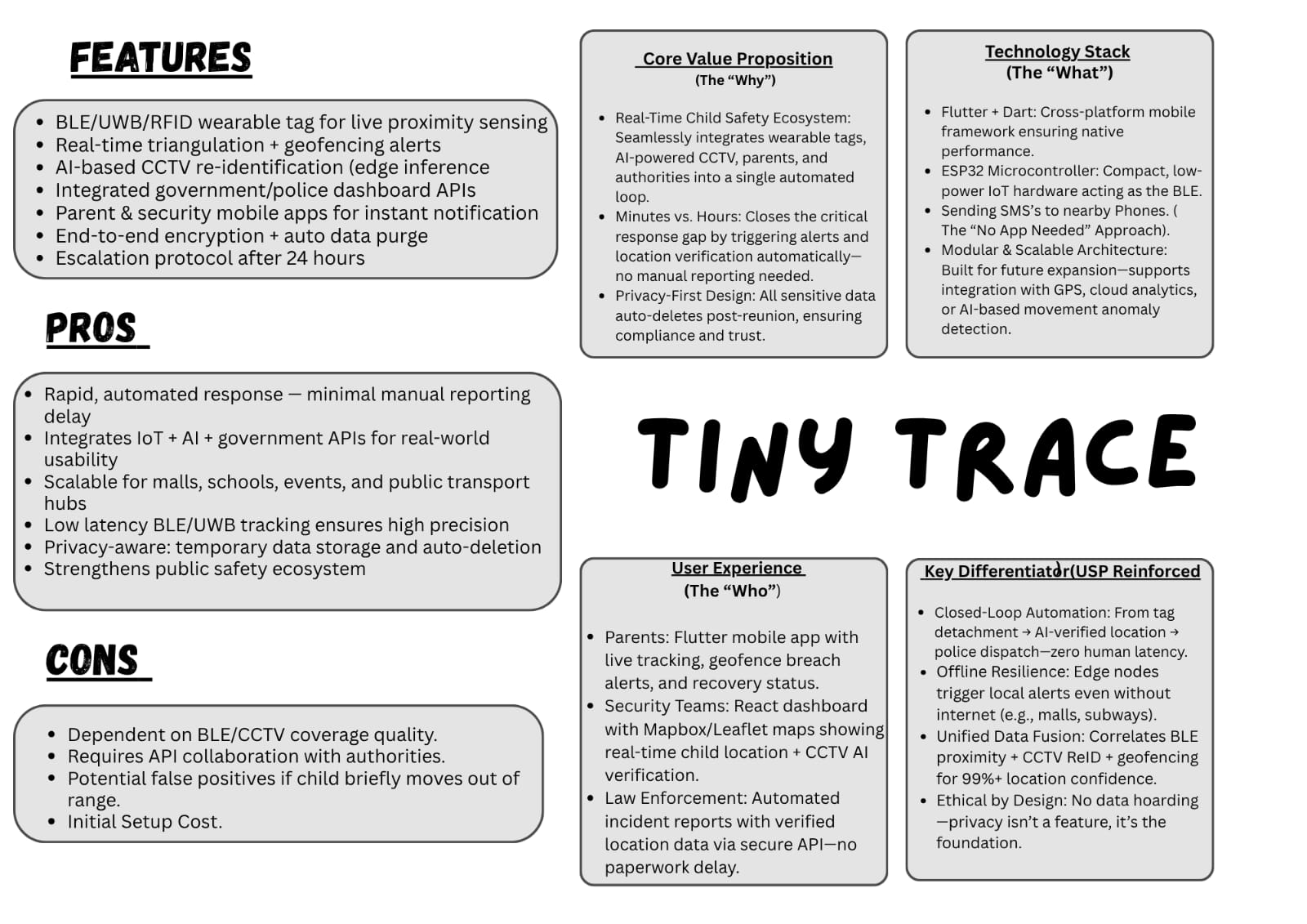

We designed a multi-tiered response system that escalates in proportion to risk, from a gentle proximity ping to a fully automated police alert. The principle: stop a separation before it becomes a crisis.

The child wears a BLE tag, and separation is flagged when the received signal strength (RSSI) between the tag and the parent's phone drops below a safe threshold. Both the parent's phone and the child's wearable vibrate instantly. Most incidents resolve at this stage.
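To make the separation trigger concrete, here is a minimal Python sketch of RSSI-based separation detection. It assumes the parent's phone receives periodic RSSI readings from the tag; the class name `SeparationDetector`, the -80 dBm alert threshold, the -75 dBm clear threshold and the five-sample window are illustrative assumptions, not values from the prototype. Averaging over a short window and using two thresholds (hysteresis) keeps a single noisy reading, common when bodies absorb the 2.4 GHz signal, from firing or clearing the alert on its own.

```python
from collections import deque


class SeparationDetector:
    """Flags separation when the smoothed RSSI of a child's tag drops too low.

    Hypothetical sketch: thresholds and window size are illustrative
    assumptions, not calibrated values from the Tiny Traces prototype.
    """

    def __init__(self, alert_dbm=-80.0, clear_dbm=-75.0, window=5):
        self.alert_dbm = alert_dbm  # average below this raises the alert
        self.clear_dbm = clear_dbm  # average above this clears it (hysteresis)
        self.samples = deque(maxlen=window)  # keeps only the newest readings
        self.separated = False

    def update(self, rssi_dbm):
        """Add one RSSI reading; return True while the child is flagged as separated."""
        self.samples.append(rssi_dbm)
        avg = sum(self.samples) / len(self.samples)
        if not self.separated and avg < self.alert_dbm:
            self.separated = True  # this is where phone and tag would vibrate
        elif self.separated and avg > self.clear_dbm:
            self.separated = False
        return self.separated


# Simulated readings: one brief dip, then a sustained drop, then recovery.
detector = SeparationDetector()
readings = [-60, -62, -61, -90, -95, -97, -99, -98, -65, -60, -58, -59, -60]
states = [detector.update(r) for r in readings]
```

With these readings the first dip to -90 dBm is ignored; the alert fires only once the five-reading average falls below -80 dBm, and it clears only after the average recovers above -75 dBm.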

If the child doesn't reappear, the parent activates "Active Search Mode" in the companion app. A real-time map shows the child's estimated location using hybrid GPS/Wi-Fi/BLE triangulation, so the parent can move directly toward the child.
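The location step can be illustrated with classic trilateration from three fixed BLE anchors. This is a textbook sketch, not the app's actual fusion logic: it converts RSSI to distance with the log-distance path-loss model (an assumed 1 m reference power of -59 dBm and a path-loss exponent of 2.0) and then solves the linearized circle equations for a 2D position in local metres. The function names `rssi_to_distance` and `trilaterate` are hypothetical.

```python
import math


def rssi_to_distance(rssi_dbm, tx_power_dbm=-59.0, path_loss_exp=2.0):
    """Log-distance path-loss model: estimated distance in metres.

    tx_power_dbm is the RSSI expected at 1 m; both defaults are assumptions.
    """
    return 10 ** ((tx_power_dbm - rssi_dbm) / (10 * path_loss_exp))


def trilaterate(anchors, distances):
    """Solve for (x, y) from three anchors [(x, y), ...] and their distances.

    Subtracting the first circle equation from the other two gives a 2x2
    linear system, which is solved here with Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = anchors
    r1, r2, r3 = distances
    a, b = 2 * (x2 - x1), 2 * (y2 - y1)
    c, d = 2 * (x3 - x1), 2 * (y3 - y1)
    e = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    f = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a * d - b * c
    if abs(det) < 1e-9:
        raise ValueError("anchors are collinear; position is ambiguous")
    return (e * d - b * f) / det, (a * f - e * c) / det


# Three anchors in a 10 m x 10 m corner of a hall, child standing at (3, 4).
anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
child = (3.0, 4.0)
dists = [math.dist(a, child) for a in anchors]
x, y = trilaterate(anchors, dists)
```

Real RSSI distances are noisy, so a deployed system would typically use more than three anchors and a least-squares fit rather than an exact solve.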

If the child isn't located, a single button dispatches an alert to the venue's security dashboard with the child's photo, name, clothing, and last-known coordinates. CCTV re-identification via YOLOv8 + DeepSORT runs on edge nodes to locate the child in live feeds.
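The one-button escalation boils down to sending a small, structured payload. The sketch below assumes a JSON message; the `SecurityAlert` class and its field names are hypothetical, chosen to mirror the details listed above (photo, name, clothing, last-known coordinates) plus a UTC timestamp. How the payload reaches the dashboard (HTTP, WebSocket or otherwise) is not specified in the source, so transport is left out.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class SecurityAlert:
    """Hypothetical alert sent to a venue security dashboard (illustrative fields)."""

    child_name: str
    photo_url: str
    clothing: str
    last_lat: float
    last_lon: float
    # Time-stamp the alert at creation, in UTC, as an ISO 8601 string.
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize every field into a JSON object for the dashboard."""
        return json.dumps(asdict(self))


# Illustrative values only.
alert = SecurityAlert(
    child_name="Aarav",
    photo_url="https://example.com/photos/aarav.jpg",
    clothing="red T-shirt, blue shorts",
    last_lat=30.69,
    last_lon=76.86,
)
payload = alert.to_json()
```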

The system then auto-forwards a time-stamped incident dossier to the local Special Juvenile Police Unit (SJPU) portal, bypassing the manual FIR delay that currently wastes the critical "golden hours." All temporary data is purged upon reunion, in line with COPPA/GDPR-K principles.
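This tier combines two properties: a time-stamped record that can be forwarded, and data that disappears at reunion. Here is a minimal in-memory sketch of both, assuming incidents are keyed by an ID; `IncidentStore`, its methods and the record layout are hypothetical, and the SJPU portal's real interface is not described in the source.

```python
import copy
from datetime import datetime, timezone


def _now():
    """Current UTC time as an ISO 8601 string."""
    return datetime.now(timezone.utc).isoformat()


class IncidentStore:
    """Hypothetical in-memory store for temporary incident data."""

    def __init__(self):
        self._incidents = {}

    def open_incident(self, incident_id, details):
        self._incidents[incident_id] = {
            "opened_at": _now(),
            "details": dict(details),
            "events": [],
        }

    def log_event(self, incident_id, description):
        # Every escalation step is recorded with its own timestamp.
        self._incidents[incident_id]["events"].append((_now(), description))

    def dossier(self, incident_id):
        """Return a time-stamped copy suitable for forwarding to a police portal."""
        record = copy.deepcopy(self._incidents[incident_id])
        record["incident_id"] = incident_id
        record["generated_at"] = _now()
        return record

    def purge_on_reunion(self, incident_id):
        """Drop the incident from this store once the child is reunited."""
        self._incidents.pop(incident_id, None)

    def __len__(self):
        return len(self._incidents)


store = IncidentStore()
store.open_incident("INC-001", {"name": "Aarav", "last_seen": "Gate 3"})
store.log_event("INC-001", "Security alert dispatched")
dossier = store.dossier("INC-001")  # would be forwarded to the portal
store.purge_on_reunion("INC-001")
```

Deleting a dictionary entry is not secure erasure; a production system would also need to wipe encrypted local storage according to its retention policy.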

Tiny Traces isn't just a wearable — it's a full-stack platform with a Flask backend, live BLE/RSSI monitoring, a data analytics dashboard, and a CCTV re-identification system. Three views, three user types, one coordinated response.

Alerts: the command center. Live BLE/RSSI monitoring polls every 3 seconds, showing green for safe and red for out-of-range, with a one-click "Notify Response Team" action. At-a-glance KPIs (total records, recovery rate, still-missing count) sit alongside the top locations by state and city. This is the screen security personnel watch in real time.
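The dashboard logic can be sketched as three small pieces: an RSSI-to-colour rule, a polling loop on the 3-second interval the text mentions, and the KPI arithmetic. The -80 dBm threshold, the `read_rssi` callback and the `{"recovered": bool}` record shape are assumptions for illustration; the actual Flask endpoints are not shown in the source.

```python
import time

POLL_INTERVAL_S = 3  # the dashboard polls every 3 seconds


def classify(rssi_dbm, threshold_dbm=-80):
    """Map a tag's RSSI to the dashboard colour (threshold is an assumption)."""
    return "green" if rssi_dbm >= threshold_dbm else "red"


def poll(read_rssi, tags, cycles, interval_s=POLL_INTERVAL_S):
    """Poll every tag `cycles` times; read_rssi is a hypothetical reader callback."""
    history = []
    for i in range(cycles):
        history.append({tag: classify(read_rssi(tag)) for tag in tags})
        if i < cycles - 1:
            time.sleep(interval_s)  # wait before the next sweep
    return history


def kpis(records):
    """KPIs from records shaped like {"recovered": bool} (illustrative schema)."""
    total = len(records)
    recovered = sum(1 for r in records if r["recovered"])
    return {
        "total_records": total,
        "recovery_rate": recovered / total if total else 0.0,
        "still_missing": total - recovered,
    }


# Example with a fake reader and no delay between sweeps.
latest = {"tag-a": -62, "tag-b": -91}
history = poll(lambda tag: latest[tag], ["tag-a", "tag-b"], cycles=2, interval_s=0)
summary = kpis([{"recovered": True}] * 3 + [{"recovered": False}])
```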

Insights: the analytics layer. Built on a 10,000-record missing-children dataset loaded from CSV into Pandas, it shows monthly trend lines, recovery-time histograms, age/height/weight distributions, categorical breakdowns (gender, race, circumstance, reporter type), and geographic heatmaps, visualized with Chart.js, largely as doughnut and bar charts. This is the screen researchers and policy teams use.
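The analytics layer uses Pandas; to keep the example dependency-free, this sketch computes two of the listed aggregates, incidents per month and median recovery time, with the standard `csv` module. The column names `date_missing` (ISO dates) and `recovery_days` (blank while a child is still missing) are assumptions about the dataset's schema.

```python
import csv
import io
from collections import Counter
from statistics import median


def monthly_counts(csv_text):
    """Count incidents per YYYY-MM from a 'date_missing' column (assumed ISO dates)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    counts = Counter(row["date_missing"][:7] for row in reader)
    return dict(sorted(counts.items()))  # chronological order for a trend line


def median_recovery_days(csv_text):
    """Median of a 'recovery_days' column, skipping blank (still-missing) rows."""
    reader = csv.DictReader(io.StringIO(csv_text))
    days = [float(r["recovery_days"]) for r in reader if r["recovery_days"].strip()]
    return median(days) if days else None


SAMPLE = """date_missing,recovery_days
2023-01-05,2
2023-01-20,
2023-02-11,5
2023-02-14,1
"""
trend = monthly_counts(SAMPLE)
typical_recovery = median_recovery_days(SAMPLE)
```

In Pandas the monthly count is roughly `df.groupby(df["date_missing"].str[:7]).size()`; either result can be serialized into the `labels` and `data` arrays a Chart.js bar chart expects.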

CCTV: the AI verification layer. It filters by last-known state and city to surface recent incidents, then runs a YOLOv8 detection model on CCTV footage to match visual descriptors. It includes a demo video of the recognition pipeline, links cases to camera-verified locations, and requires human-in-the-loop confirmation before dispatch.
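Human-in-the-loop confirmation can be expressed as a simple gate between the model and dispatch. This sketch does not run YOLOv8; it assumes the re-identification stage already emits candidate matches with a similarity score in [0, 1]. `MatchCandidate`, `review_queue`, `ready_to_dispatch` and the 0.6 cut-off are hypothetical. A high score only puts a match in front of an operator; nothing is dispatched until a person confirms it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MatchCandidate:
    """A hypothetical re-identification hit from the CCTV pipeline."""

    camera_id: str
    score: float  # similarity score in [0, 1] (assumed scale)
    confirmed_by: Optional[str] = None  # operator ID once a human confirms


def review_queue(candidates, min_score=0.6):
    """Candidates worth showing to an operator, strongest match first."""
    strong = (c for c in candidates if c.score >= min_score)
    return sorted(strong, key=lambda c: c.score, reverse=True)


def ready_to_dispatch(candidate, min_score=0.6):
    """Dispatch only if the model is confident AND a human has confirmed."""
    return candidate.score >= min_score and candidate.confirmed_by is not None


candidates = [
    MatchCandidate("cam-3", 0.9),
    MatchCandidate("cam-1", 0.4),
    MatchCandidate("cam-7", 0.7),
]
queue = review_queue(candidates)
```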

Note: Screenshots of the live Alerts, Insights, and CCTV interfaces are coming soon.

We chose data sovereignty over tracking speed. All data is encrypted locally, never persisted to the cloud, and auto-purged upon reunion. The trade-off is slightly higher latency than a cloud-first approach, but no stored location history that could feed surveillance capitalism. The system exists to find your child, not to build a profile on them.

GPS fails indoors, a hard constraint in malls and pandals. We combined BLE triangulation with UWB anchors and fused the result with the last-known GPS geofence exit point. It isn't pinpoint precision, but it stays reliable where GPS is effectively blind.
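The source does not say how the indoor estimate and the GPS geofence exit point are fused. One standard option is inverse-variance weighting: each position estimate carries an uncertainty, and more certain estimates get more weight. The sketch assumes all estimates are already converted into a local metric frame (x, y in metres) with a standard deviation `sigma`; the function name `fuse` and the example uncertainties are illustrative.

```python
import math


def fuse(estimates):
    """Inverse-variance weighted average of (x, y, sigma) estimates in local metres.

    Estimates with a smaller sigma (more trustworthy) get more weight; the
    returned sigma is the uncertainty of the combined estimate.
    """
    if not estimates:
        raise ValueError("need at least one position estimate")
    weights = [1.0 / (s * s) for _, _, s in estimates]
    total = sum(weights)
    x = sum(w * e[0] for w, e in zip(weights, estimates)) / total
    y = sum(w * e[1] for w, e in zip(weights, estimates)) / total
    return x, y, math.sqrt(1.0 / total)


# Last GPS fix at the geofence exit (about 25 m uncertainty, assumed) and an
# indoor BLE/UWB estimate (about 4 m uncertainty, assumed).
gps_exit = (0.0, 0.0, 25.0)
indoor = (12.0, 8.0, 4.0)
fx, fy, fsigma = fuse([gps_exit, indoor])
```

Because the GPS exit point is far less certain indoors, it barely pulls the fused position away from the BLE/UWB estimate, but it still anchors the search when no indoor estimate is available.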

No physical UWB or RFID tags were available, so we simulated tag behavior with ESP32 dev kits and validated the full pipeline with mock APIs and pre-recorded CCTV footage. The system logic was proven end-to-end in simulation; the design is ready to move onto real hardware.

I chose the brief, set the research direction, did the deep NCRB data analysis, mapped stakeholders, identified cognitive behavior patterns by age group, and charted the entire problem space onto physical boards before any digital work began.

In a mixed team of 4 designers and 3 developers, I bridged the gap — teaching developers how to think in empathy maps and problem frames, while helping designers articulate technical constraints. Every decision was made at the chart paper wall, not in Figma.

I delivered the final pitch to the jury, opening with a hook that referenced the film Duvvada Jagannadham ("don't let your kid become like DJ") before walking them through the system logic. The jury cited my presentation energy and the hook as standout moments.

Tools: Arduino and the Arduino IDE, Visual Studio Code, Cursor, Google Docs, Google Scholar, FigJam, and, most importantly, chart paper, sticky notes, and markers for the hands-on research wall.

Tiny Traces won 1st place at CTRL+CREATE — a 48-hour hackathon at Karnavati University. The jury validated the system's potential to save lives in high-density Indian infrastructure. The prize: ₹3,000 and the knowledge that a child safety ecosystem born in a hackathon could become a standard layer of protection in public spaces.